A company wants to build a pipeline to update the standard AMI monthly. The AMI must be updated to use the most recent patches to ensure that launched Amazon EC2 instances are up to date. Each new AMI must be available to all AWS accounts in the company's organization in AWS Organizations.

The company needs to configure an automated pipeline to build the AMI.

Which solution will meet these requirements with the MOST operational efficiency?

A company is building a new pipeline by using AWS CodePipeline and AWS CodeBuild in a build account. The pipeline consists of two stages. The first stage is a CodeBuild job to build and package an AWS Lambda function. The second stage consists of deployment actions that operate on two different AWS accounts: a development environment account and a production environment account. The deployment stages use the AWS CloudFormation action that CodePipeline invokes to deploy the infrastructure that the Lambda function requires.

A DevOps engineer creates the CodePipeline pipeline and configures the pipeline to encrypt build artifacts by using the AWS Key Management Service (AWS KMS) AWS managed key for Amazon S3 (the aws/s3 key). The artifacts are stored in an S3 bucket. When the pipeline runs, the CloudFormation actions fail with an access denied error.

Which combination of actions must the DevOps engineer perform to resolve this error? (Select TWO.)
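
As background for this scenario: AWS managed keys such as aws/s3 cannot be shared across accounts, so cross-account pipelines typically encrypt artifacts with a customer managed key whose key policy names the other accounts. The following is a minimal sketch of such a key policy statement, not a complete policy. The function name and the account IDs are hypothetical placeholders.

```python
import json


def cross_account_key_statement(account_ids):
    """Build one KMS key policy statement that lets other accounts use the key.

    The account IDs are placeholders; a real policy would also need
    statements for key administrators and the pipeline's own roles.
    """
    return {
        "Sid": "AllowUseOfKeyByDeploymentAccounts",
        "Effect": "Allow",
        "Principal": {
            "AWS": [f"arn:aws:iam::{account_id}:root" for account_id in account_ids]
        },
        "Action": ["kms:Decrypt", "kms:DescribeKey"],
        "Resource": "*",
    }


# Hypothetical development and production account IDs.
statement = cross_account_key_statement(["111111111111", "222222222222"])
print(json.dumps(statement, indent=2))
```

The roles in the deployment accounts would still need matching IAM permissions on the key and on the artifact bucket; the key policy alone is not sufficient.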

A software engineering team is using AWS CodeDeploy to deploy a new version of an application. The team wants to ensure that if any issues arise during the deployment, the process can automatically roll back to the previous version.

During the deployment process, a health check confirms the application's stability. If the health check fails, the deployment must revert automatically.

Which solution will meet these requirements?
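
For reference, CodeDeploy deployment groups accept an `autoRollbackConfiguration` setting with an `enabled` flag and a list of trigger `events`. The sketch below only builds that structure as a plain dictionary; the helper name is hypothetical, and it assumes the health check runs inside the deployment (for example, in a lifecycle hook script) so that a failed check marks the deployment as failed.

```python
def auto_rollback_configuration(on_failure=True, on_alarm=False):
    """Return a dict shaped like CodeDeploy's autoRollbackConfiguration."""
    events = []
    if on_failure:
        # Roll back when the deployment itself fails, e.g. a hook script exits non-zero.
        events.append("DEPLOYMENT_FAILURE")
    if on_alarm:
        # Roll back when an associated CloudWatch alarm stops the deployment.
        events.append("DEPLOYMENT_STOP_ON_ALARM")
    return {"enabled": bool(events), "events": events}


config = auto_rollback_configuration()
print(config)
```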

A company has multiple AWS accounts. The company uses AWS IAM Identity Center that is integrated with a third-party SAML 2.0 identity provider (IdP).

The attributes for access control feature is enabled in IAM Identity Center. The attribute mapping list maps the department key from the IdP to the ${path:enterprise.department} attribute. All existing Amazon EC2 instances have a d1, d2, or d3 department tag that corresponds to three of the company’s departments.

A DevOps engineer must create policies based on the matching attributes. The policies must grant each user access to only the EC2 instances that are tagged with the user’s respective department name.

Which condition key should the DevOps engineer include in the custom permissions policies to meet these requirements?
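
To illustrate how attribute-based access control is usually expressed: attributes passed through IAM Identity Center surface as principal tags, and a policy can compare a resource tag against the matching principal tag. The sketch below builds one such statement as a dictionary. The function name and the chosen EC2 actions are illustrative assumptions, not taken from the question.

```python
import json


def department_abac_statement(tag_key="department"):
    """Build an IAM statement that matches the EC2 resource tag to the caller's tag."""
    return {
        "Effect": "Allow",
        # Example actions only; a real policy would list what users actually need.
        "Action": ["ec2:StartInstances", "ec2:StopInstances"],
        "Resource": "*",
        "Condition": {
            "StringEquals": {
                f"ec2:ResourceTag/{tag_key}": f"${{aws:PrincipalTag/{tag_key}}}"
            }
        },
    }


abac_statement = department_abac_statement()
print(json.dumps(abac_statement, indent=2))
```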

A company manages multiple AWS accounts in AWS Organizations. The company's security policy states that AWS account root user credentials for member accounts must not be used. The company monitors access to the root user credentials.

A recent alert shows that the root user in a member account launched an Amazon EC2 instance. A DevOps engineer must create an SCP at the organization's root level that will prevent the root user in member accounts from making any AWS service API calls.

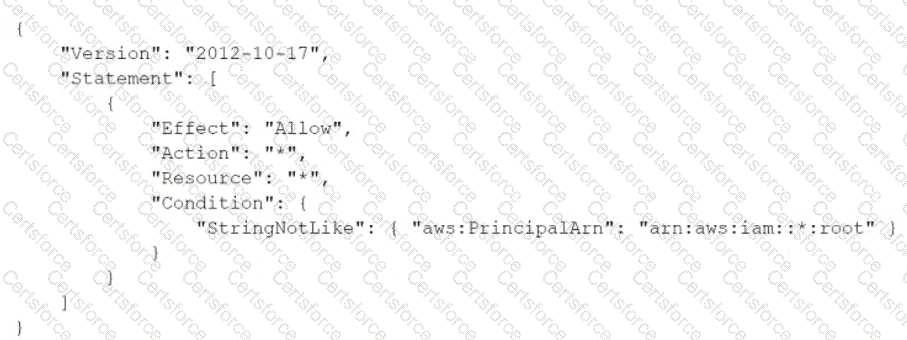

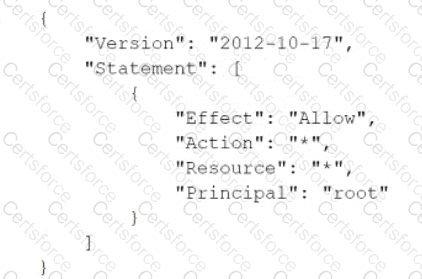

Which SCP will meet these requirements?

A)

B)

C)

D)
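
Because the option images are not reproduced as text here, the following sketch shows the general shape of an SCP that denies all actions to the root user of member accounts. It follows the widely documented pattern of matching `aws:PrincipalArn` against the root ARN and is not claimed to match any specific option above.

```python
import json

# Deny every action when the calling principal is an account's root user.
deny_root_scp = {
    "Version": "2012-10-17",
    "Statement": [
        {
            "Sid": "DenyRootUser",
            "Effect": "Deny",
            "Action": "*",
            "Resource": "*",
            "Condition": {
                "StringLike": {"aws:PrincipalArn": "arn:aws:iam::*:root"}
            },
        }
    ],
}

print(json.dumps(deny_root_scp, indent=2))
```

SCPs do not apply to the management account, so attaching this at the organization root affects member accounts only.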

A DevOps engineer needs to implement a CI/CD pipeline that uses AWS CodeBuild to run a test suite. The test suite contains many test cases and takes a long time to finish running. The DevOps engineer wants to reduce the duration to run the tests. However, the DevOps engineer still wants to generate a single test report for all the test cases.

Which solution will meet these requirements?
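
The general idea behind shortening a long test run is to split the suite into shards that run in parallel and then combine the results into one report. The sketch below shows that idea in plain Python only; the function names and the result format are hypothetical and do not represent a CodeBuild API.

```python
def split_into_shards(test_cases, shard_count):
    """Distribute test cases round-robin across shard_count shards."""
    if shard_count < 1:
        raise ValueError("shard_count must be at least 1")
    shards = [[] for _ in range(shard_count)]
    for index, case in enumerate(test_cases):
        shards[index % shard_count].append(case)
    return shards


def merge_reports(shard_results):
    """Combine per-shard result dicts (test name -> outcome) into one report."""
    merged = {}
    for result in shard_results:
        merged.update(result)
    return merged


shards = split_into_shards(["t1", "t2", "t3", "t4", "t5"], 2)
report = merge_reports([{"t1": "pass"}, {"t2": "fail"}])
print(shards, report)
```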

A company wants to use a grid system for a proprietary enterprise in-memory data store on top of AWS. This system can run in multiple server nodes in any Linux-based distribution. The system must be able to reconfigure the entire cluster every time a node is added or removed. When adding or removing nodes, an /etc/cluster/nodes config file must be updated, listing the IP addresses of the current node members of that cluster.

The company wants to automate the task of adding new nodes to a cluster.

What can a DevOps engineer do to meet these requirements?
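
Whatever mechanism triggers the update, each node ultimately has to regenerate the node list from the current cluster membership. A minimal sketch of that rendering step follows. The one-address-per-line file format and the function name are assumptions, since the question does not specify the file's layout.

```python
import ipaddress


def render_nodes_file(ip_addresses):
    """Render the cluster node list: unique IPs, numerically sorted, one per line."""
    unique = {ipaddress.ip_address(ip) for ip in ip_addresses}
    return "".join(f"{ip}\n" for ip in sorted(unique))


# Hypothetical membership, including a duplicate entry.
content = render_nodes_file(["10.0.0.12", "10.0.0.3", "10.0.0.3"])
print(content, end="")
```

Sorting and de-duplicating keeps the output deterministic, so rerunning the step with the same membership produces an identical file.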

A company requires an RPO of 2 hours and an RTO of 10 minutes for its data and application at all times. An application uses a MySQL database and Amazon EC2 web servers. The development team needs a strategy for failover and disaster recovery.

Which combination of deployment strategies will meet these requirements? (Select TWO.)

A company is running its ecommerce website on AWS. The website is currently hosted on a single Amazon EC2 instance in one Availability Zone. A MySQL database runs on the same EC2 instance. The company needs to eliminate single points of failure in the architecture to improve the website's availability and resilience.

Which solution will meet these requirements with the LEAST configuration changes to the website?

A company uses Amazon S3 to store proprietary information. The development team creates buckets for new projects on a daily basis. The security team wants to ensure that all existing and future buckets have encryption, logging, and versioning enabled. Additionally, no buckets should ever be publicly read or write accessible.

What should a DevOps engineer do to meet these requirements?