During model training, a portion of the dataset (commonly 70–80%) is used to teach the machine learning algorithm to identify patterns and relationships between the input features and the output label. The remaining data (usually 20–30%) is held back to evaluate the model’s performance on data it has not seen. A model that scores well on the training data but poorly on the held-back data is overfitted (fitted too tightly to the training data), so this check indicates how well the model generalizes to new inputs.
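The holdout split described above can be sketched in a few lines of plain Python. This is a minimal illustration, not the method of any particular library: the helper `train_test_split`, its `test_fraction` and `seed` parameters, and the toy `data` list are all hypothetical names chosen for this example.

```python
import random


def train_test_split(rows, test_fraction=0.25, seed=0):
    """Shuffle a copy of rows and return (train, test) lists."""
    if not 0.0 < test_fraction < 1.0:
        raise ValueError("test_fraction must be between 0 and 1")
    shuffled = list(rows)                      # leave the caller's data untouched
    random.Random(seed).shuffle(shuffled)      # seeded so the split is repeatable
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


# Hypothetical dataset of (feature, label) pairs.
data = [(x, 2 * x + 1) for x in range(20)]

# Keep 75% for training and hold back 25% for evaluation.
train, test = train_test_split(data, test_fraction=0.25, seed=42)
print(len(train), len(test))
```

Shuffling before splitting avoids a held-back set that only contains the last rows of an ordered dataset; fixing the seed keeps the split reproducible between runs.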

Key steps highlighted in Microsoft Learn materials:

Model Training: Use the training data to fit the model, so that the algorithm learns the relationships between input features and labels.

Model Evaluation: Use the test or validation data to assess the accuracy, precision, recall, or other metrics of the trained model.

Model Deployment: Once validated, the model is deployed to make real-world predictions.
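The training and evaluation steps above can be illustrated with a deliberately tiny classifier. This is a sketch under stated assumptions: the "model" is just a single learned cutoff, and the functions `fit_threshold`, `predict`, and `evaluate`, as well as the sample data, are hypothetical and invented for this example. Deployment is outside its scope.

```python
def fit_threshold(train):
    """Training: learn a cutoff as the midpoint between the two class means."""
    pos = [x for x, y in train if y == 1]
    neg = [x for x, y in train if y == 0]
    return (sum(pos) / len(pos) + sum(neg) / len(neg)) / 2


def predict(threshold, x):
    """Predict class 1 when the feature exceeds the learned cutoff."""
    return 1 if x > threshold else 0


def evaluate(threshold, test):
    """Evaluation: compare predictions with the held-back labels."""
    tp = fp = fn = tn = 0
    for x, y in test:
        p = predict(threshold, x)
        if p == 1 and y == 1:
            tp += 1
        elif p == 1 and y == 0:
            fp += 1
        elif p == 0 and y == 1:
            fn += 1
        else:
            tn += 1
    return {
        "accuracy": (tp + tn) / len(test),
        "precision": tp / (tp + fp) if tp + fp else 0.0,
        "recall": tp / (tp + fn) if tp + fn else 0.0,
    }


# Hypothetical (feature, label) pairs; the test rows are never used for fitting.
train = [(1.0, 0), (2.0, 0), (3.0, 0), (6.0, 1), (7.0, 1), (8.0, 1)]
test = [(2.5, 0), (5.0, 0), (5.5, 1), (9.0, 1)]

threshold = fit_threshold(train)
metrics = evaluate(threshold, test)
print(threshold, metrics)
```

Because the metrics are computed only on rows the model never saw during fitting, they estimate performance on new inputs rather than how well the model memorized its training data.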

Other options explained:

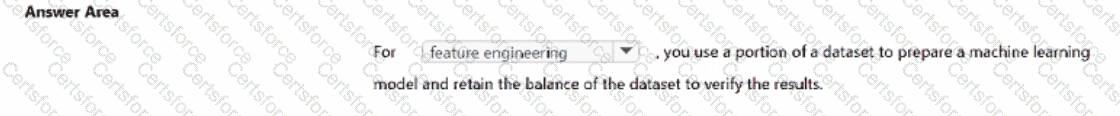

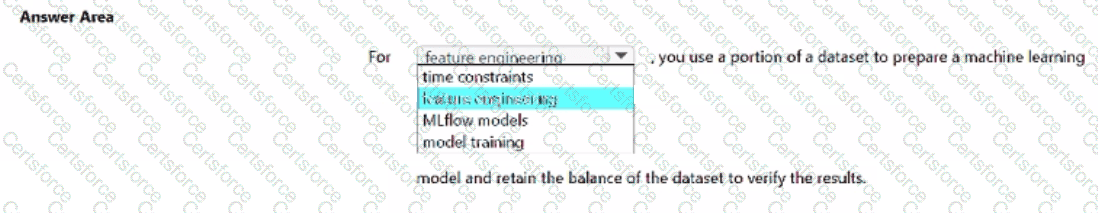

Feature engineering: Involves preparing and transforming input data, not splitting datasets for training and testing.

Time constraints: Not a machine learning process step.

Feature stripping: Not a recognized ML concept.

MLflow models: MLflow is an open-source platform for tracking experiments and for packaging and managing models; the term does not describe splitting a dataset or training a model.

Thus, when you use a portion of the dataset to prepare and train a machine learning model, and retain the rest to verify results, the process is known as model training.
