According to the Microsoft Azure AI Fundamentals (AI-900) official study guide and the Microsoft Learn module “Describe features of natural language processing (NLP) workloads on Azure,” Natural Language Processing refers to the branch of AI that enables computers to interpret, understand, and generate human language. One of the main NLP workloads identified by Microsoft is speech-to-text conversion, which transforms spoken words into written text.

Creating a text transcript of a voice recording fits this definition because it converts spoken language in audio form into text, a process handled by speech recognition models. These models analyze the acoustic features of human speech, segment phonemes, identify words, and produce a text transcript. On Azure, this capability is provided by the speech-to-text feature of the Azure AI Speech service (formerly part of Azure Cognitive Services).
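As a rough illustration of the speech-to-text workload described above, the following is a minimal sketch using the Azure Speech SDK for Python (the `azure-cognitiveservices-speech` package). The function name `transcribe_file` and its parameters are hypothetical and chosen only for this example; it assumes you already have a Speech resource key and region, and a WAV recording on disk.

```python
def transcribe_file(wav_path: str, key: str, region: str) -> str:
    """Return the transcript of the first utterance in a WAV file.

    Hypothetical helper for illustration; not part of the Azure SDK.
    """
    # Imported lazily so this module still loads where the SDK is absent.
    # Requires: pip install azure-cognitiveservices-speech
    import azure.cognitiveservices.speech as speechsdk

    # Credentials for the Speech resource (assumed to exist already).
    speech_config = speechsdk.SpeechConfig(subscription=key, region=region)
    # Read audio from a file instead of the default microphone.
    audio_config = speechsdk.audio.AudioConfig(filename=wav_path)
    recognizer = speechsdk.SpeechRecognizer(
        speech_config=speech_config, audio_config=audio_config
    )

    # recognize_once() transcribes a single utterance and then stops.
    result = recognizer.recognize_once()
    if result.reason == speechsdk.ResultReason.RecognizedSpeech:
        return result.text
    raise RuntimeError(f"No transcript produced: {result.reason}")
```

A call might look like `transcribe_file("meeting.wav", key, region)`, with the key and region read from configuration rather than hard-coded. Because `recognize_once()` handles only one utterance, a full-length recording would more likely use the SDK's continuous recognition mode; this sketch keeps to the simplest case.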

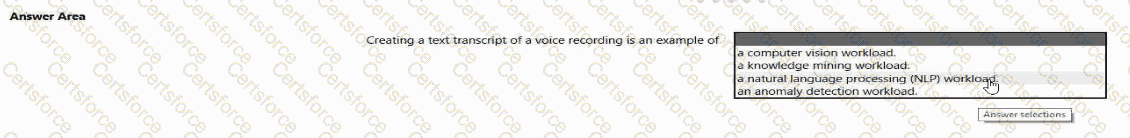

Let’s examine the other options to clarify why they are incorrect:

Computer vision workload: Involves interpreting and analyzing visual data such as images and videos (e.g., object detection, facial recognition). It does not deal with speech or audio.

Knowledge mining workload: Refers to extracting useful information from large amounts of structured and unstructured data using services like Azure Cognitive Search (now Azure AI Search). It does not transcribe audio.

Anomaly detection workload: Involves identifying unusual patterns in data (e.g., fraud detection or sensor anomalies), unrelated to language or speech.

In summary, when a system creates a text transcript from spoken audio, it is performing a speech recognition task, which is classified under Natural Language Processing (NLP). This workload helps make spoken content searchable, analyzable, and accessible, in line with the inclusiveness principle of Microsoft’s Responsible AI framework.
