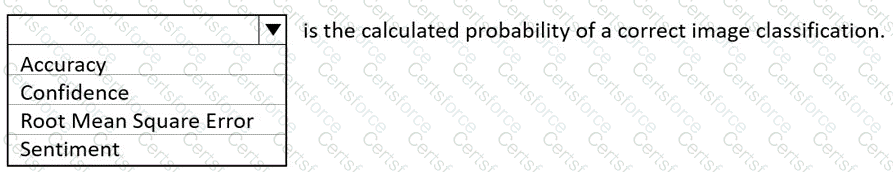

Confidence.

According to the Microsoft Azure AI Fundamentals (AI-900) Official Study Guide and the Microsoft Learn module “Explore computer vision in Microsoft Azure,” the confidence score represents the calculated probability that a model’s prediction is correct. In image classification, when an AI model analyzes an image and assigns it to a specific category, it also produces a confidence value: a numerical probability (usually between 0 and 1) indicating how certain the model is about its prediction.

For example, if an image classification model labels an image as “cat” with a confidence of 0.92, the model estimates a 92% probability that the image depicts a cat. This is the model’s own estimate, not a guarantee, so the confidence value tells developers and users how certain the model is about a particular classification.
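To make the idea concrete, here is a minimal Python sketch of one common way a classifier can produce a confidence score. It assumes the model outputs a raw score (logit) per class, converts those scores into probabilities with a softmax function, and reports the highest probability as the confidence. Softmax is an illustrative assumption here; the AI-900 materials quoted above do not specify how any particular Azure model computes its scores, and the labels and scores below are made up.

```python
import math

def softmax(logits):
    """Convert raw class scores (logits) into probabilities that sum to 1."""
    # Subtract the largest logit before exponentiating to avoid overflow.
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def classify(labels, logits):
    """Return the top label and its probability, i.e. the confidence score."""
    probs = softmax(logits)
    best = max(range(len(labels)), key=lambda i: probs[i])
    return labels[best], probs[best]

# Hypothetical raw scores for one image; not output from any real model.
label, confidence = classify(["cat", "dog", "rabbit"], [4.0, 1.5, 0.5])
print(f"{label}: {confidence:.2f}")  # prints "cat: 0.90"
```

The other classes still receive small probabilities; the confidence reported for the prediction is simply the probability of the winning class.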

Microsoft Learn emphasizes that in Azure Cognitive Services, such as the Custom Vision service, each prediction result includes both the predicted label (class) and a confidence score. These scores are essential for evaluating model performance and for setting thresholds for automated decisions (for example, accepting only predictions with a probability above 0.8).
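The thresholding idea can be sketched as follows. The `Prediction` class and the `accept_confident` helper are hypothetical, chosen only to mirror the label-plus-score pair described above; they are not the Custom Vision SDK’s own types, and the 0.8 default simply echoes the example threshold.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    # Hypothetical shape: a predicted label plus its confidence score.
    tag_name: str
    probability: float

def accept_confident(predictions, threshold=0.8):
    """Keep only predictions whose confidence is strictly above the threshold."""
    return [p for p in predictions if p.probability > threshold]

# Made-up scores for one image.
results = [
    Prediction("cat", 0.92),
    Prediction("dog", 0.05),
    Prediction("rabbit", 0.03),
]
accepted = accept_confident(results)
print([p.tag_name for p in accepted])  # prints ['cat']
```

Predictions that fall below the threshold are not discarded as wrong; in a real workflow they would typically be routed to a person for review rather than acted on automatically.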

Let’s evaluate the other options:

Accuracy: This is an overall performance metric measuring the percentage of correct predictions across the dataset, not a probability for a single prediction.
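The difference shows up clearly in code: accuracy is a single number computed over a whole labeled dataset, while confidence belongs to one prediction. This minimal sketch uses a hypothetical `accuracy` helper and made-up labels.

```python
def accuracy(predicted, actual):
    """Fraction of predictions that match the true labels across a dataset."""
    if len(predicted) != len(actual) or not actual:
        raise ValueError("need two non-empty lists of equal length")
    correct = sum(p == a for p, a in zip(predicted, actual))
    return correct / len(actual)

# Hypothetical evaluation set of four images.
predicted = ["cat", "dog", "cat", "rabbit"]
actual = ["cat", "dog", "dog", "rabbit"]
print(accuracy(predicted, actual))  # prints 0.75
```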

Root Mean Square Error (RMSE): This is a metric for regression models, not classification tasks. It measures the typical size of the error between predicted and actual numeric values, computed as the square root of the mean squared difference.
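For contrast, RMSE compares numeric predictions with numeric targets, which is why it fits regression rather than classification. A minimal sketch with a hypothetical `rmse` helper and invented values:

```python
import math

def rmse(predicted, actual):
    """Root mean square error between numeric predictions and true values."""
    if len(predicted) != len(actual) or not actual:
        raise ValueError("need two non-empty lists of equal length")
    squared_errors = [(p - a) ** 2 for p, a in zip(predicted, actual)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))

# Hypothetical regression output, e.g. predicted vs. actual prices.
print(rmse([3.0, 5.0, 2.0], [2.0, 5.0, 4.0]))
```

Here the errors are 1, 0 and -2, so the result is the square root of 5/3, roughly 1.29, in the same units as the target values. There is no label and no per-prediction probability involved.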

Sentiment: This is a type of prediction (positive, negative, neutral) in text analysis, not a probability metric.

Therefore, based on Microsoft’s AI-900 training materials and Azure Cognitive Services documentation, the calculated probability of a correct image classification is called Confidence, which expresses how sure the model is about its prediction for a specific input.
