In the Microsoft Azure AI Fundamentals (AI-900) curriculum, computer vision capabilities refer to artificial intelligence systems that can analyze and interpret visual content such as images and videos. The Azure AI Vision and Face API services provide pretrained models for detecting, recognizing, and analyzing visual information, enabling developers to build intelligent applications that understand what they “see.”

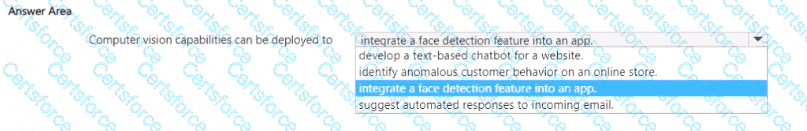

When asked how computer vision capabilities can be deployed, the correct answer is to integrate a face detection feature into an app. This aligns with Microsoft Learn’s module “Describe features of computer vision workloads,” which explains that computer vision can identify objects, classify images, detect faces, and extract text (OCR). The Face API, a part of Azure AI Vision, specifically provides face detection, verification, and emotion recognition capabilities.

Integrating these services into an application allows it to perform actions such as:

- Detecting human faces in photos or video streams.
- Recognizing facial attributes like age, emotion, or head pose.
- Enabling secure authentication based on face recognition.
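As a rough illustration of what integrating face detection into an app can look like, the sketch below builds a request to the Face service's REST detect operation and extracts the bounding box of each detected face from the JSON response. The function names (`build_detect_request`, `face_boxes`, `detect_faces`) are hypothetical, the endpoint and key are placeholders for your own Azure resource, and the choice of `detection_03` is an assumption made for illustration. This is a minimal sketch, not the only or official way to call the service.

```python
import json
import urllib.parse
import urllib.request


def build_detect_request(endpoint, key, image_url):
    """Build (but do not send) a POST request to the Face detect operation.

    `endpoint` and `key` are placeholders for your own Face resource values.
    """
    query = urllib.parse.urlencode({
        "returnFaceId": "false",            # only bounding boxes are needed here
        "detectionModel": "detection_03",   # assumed model choice for this sketch
    })
    url = f"{endpoint.rstrip('/')}/face/v1.0/detect?{query}"
    body = json.dumps({"url": image_url}).encode("utf-8")
    headers = {
        "Ocp-Apim-Subscription-Key": key,
        "Content-Type": "application/json",
    }
    return urllib.request.Request(url, data=body, headers=headers, method="POST")


def face_boxes(response_json):
    """Return a (left, top, width, height) tuple for each detected face."""
    faces = json.loads(response_json)
    return [
        (
            face["faceRectangle"]["left"],
            face["faceRectangle"]["top"],
            face["faceRectangle"]["width"],
            face["faceRectangle"]["height"],
        )
        for face in faces
    ]


def detect_faces(endpoint, key, image_url):
    """Send the request and return face bounding boxes (needs a real resource)."""
    request = build_detect_request(endpoint, key, image_url)
    with urllib.request.urlopen(request) as response:
        return face_boxes(response.read().decode("utf-8"))
```

An app would call `detect_faces` with its own endpoint, key, and a publicly reachable image URL, then draw or count the returned rectangles. Splitting request construction from response parsing keeps both pieces easy to check without network access.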

The other options are incorrect because they relate to different AI workloads:

- Develop a text-based chatbot for a website: This falls under Conversational AI, implemented with Azure Bot Service or Conversational Language Understanding (CLU).
- Identify anomalous customer behavior on an online store: This task relates to machine learning and anomaly detection models, not computer vision.
- Suggest automated responses to incoming email: This uses Natural Language Processing (NLP) capabilities, not visual analysis.

Therefore, the correct completion of the statement is:

“Computer vision capabilities can be deployed to integrate a face detection feature into an app.”
